OpenServ - Reasoning as an Asset Class

From bigger models to better reasoning

The last two years of AI progress have followed one main axis: scale. More parameters, more context, more GPUs. The assumption was that better reasoning simply meant bigger models.

OpenServ’s Reasoning framework challenges that idea. Instead of letting models think out loud in unbounded natural language, it converts problems into compact, machine-readable reasoning graphs. A large model creates the graph once; a smaller, cheaper nano model executes it repeatedly.
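The plan-once, execute-many split can be sketched in a few lines. This is a minimal illustration under stated assumptions, not OpenServ's implementation. The graph format, the `Node`, `plan`, and `execute` names, and the "discounted total" task are all hypothetical, and plain Python functions stand in for the calls a nano model would make. What it shows is the property the paragraph describes: once a graph exists, each new input only walks a fixed set of steps in dependency order, and the expensive planner is never called again.

```python
from dataclasses import dataclass, field
from graphlib import TopologicalSorter
from typing import Any, Callable


@dataclass
class Node:
    """One step of a (hypothetical) reasoning graph and the nodes it depends on."""
    step: Callable[..., Any]  # stand-in for a cheap nano-model call
    deps: list[str] = field(default_factory=list)


def plan(problem_type: str) -> dict[str, Node]:
    """Stand-in for the one-time, expensive planning call to a large model."""
    if problem_type != "discounted_total":
        raise ValueError(f"no plan for {problem_type!r}")
    return {
        "subtotal": Node(lambda inp: inp["price"] * inp["qty"]),
        "discount": Node(lambda inp, subtotal: subtotal * inp["discount_rate"],
                         ["subtotal"]),
        "total": Node(lambda inp, subtotal, discount: subtotal - discount,
                      ["subtotal", "discount"]),
    }


def execute(graph: dict[str, Node], inputs: dict[str, Any]) -> dict[str, Any]:
    """Run each node once in dependency order, passing it its parents' results."""
    order = TopologicalSorter({name: node.deps for name, node in graph.items()})
    results: dict[str, Any] = {}
    for name in order.static_order():
        node = graph[name]
        results[name] = node.step(inputs, *(results[d] for d in node.deps))
    return results


graph = plan("discounted_total")  # planning cost is paid once
for inputs in (
    {"price": 20.0, "qty": 3, "discount_rate": 0.1},
    {"price": 5.0, "qty": 10, "discount_rate": 0.0},
):
    print(execute(graph, inputs)["total"])  # execution cost is paid per input
```

Keeping `execute` free of any planner call is the design choice that matters here: it separates the one-time cost of structuring a problem from the recurring cost of solving instances of it.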

In joint research with Coyotiv, this approach delivers up to 99% reasoning accuracy and 30–74x higher performance per dollar compared with standard prompting on benchmarks like AdvancedIF, GSM-Hard, and SCALE. In simple terms, structured reasoning lets smaller models match or exceed models one or two tiers larger. The paper calls this the “OpenServ Reasoning Parity Effect.”
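One simple way to read "performance per dollar" is accuracy divided by cost per query. Because the large model plans once and the nano model executes many times, the planning cost is amortized across every query that reuses the graph. The sketch below makes that arithmetic explicit. Every price and accuracy figure in it is a made-up placeholder rather than a number from the paper, and the accuracy-over-cost metric is an assumption about how such a ratio might be computed.

```python
# All figures below are hypothetical placeholders, not benchmark results.

def cost_per_query(plan_cost: float, exec_cost: float, n_queries: int) -> float:
    """Spread a one-time planning cost over n_queries executions."""
    if n_queries <= 0:
        raise ValueError("n_queries must be positive")
    return plan_cost / n_queries + exec_cost


def perf_per_dollar(accuracy: float, cost: float) -> float:
    """Accuracy achieved per dollar spent on a single query."""
    return accuracy / cost


# Baseline: a large model prompted directly, so there is no plan to amortize.
baseline = perf_per_dollar(0.90, cost_per_query(0.0, 0.020, 1))

# Structured approach: one large-model planning call, then cheap nano-model runs.
for n in (10, 100, 1_000, 10_000):
    structured = perf_per_dollar(0.92, cost_per_query(0.50, 0.001, n))
    print(f"n={n:>6}: {structured / baseline:.2f}x baseline performance per dollar")
```

With these placeholder numbers the structured approach loses at very low reuse and pulls ahead once the plan is amortized over enough queries, which is why graph reuse sits at the center of the cost argument.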

For builders, it matters for two reasons:

Cost: reasoning turns into an operating expense you can optimize, not a tax you pay to whoever runs the biggest models.

Reliability: deterministic graphs drift less than open-ended chain-of-thought, and that consistency becomes crucial once agents start moving real money or executing on-chain actions.

This is OpenServ’s first wedge: a model-agnostic reasoning engine that makes agents cheaper and more accurate, regardless of which frontier model wins.

An integrated stack, not another SDK

If this were just a clever prompting trick, it wouldn’t be defensible. Techniques like this spread too quickly.

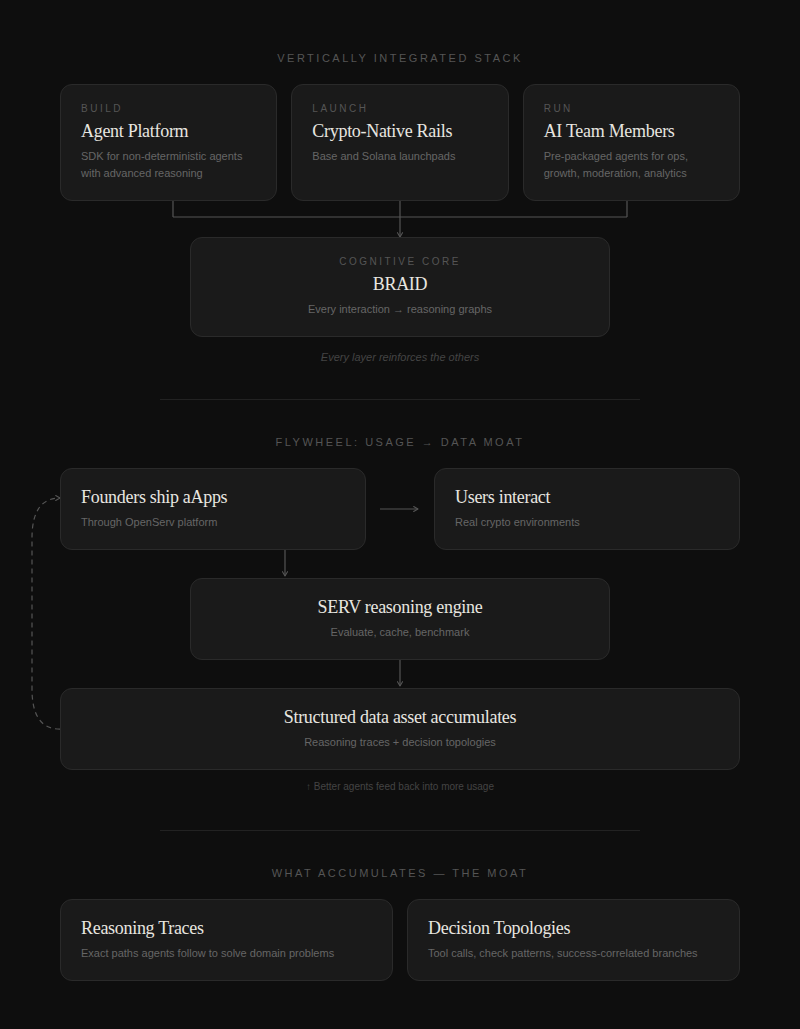

OpenServ is building a vertically integrated stack where its Reasoning framework sits at the cognitive core:

Build: An AI agent platform and SDK for spinning up non-deterministic agents with advanced reasoning, decision-making, and inter-agent collaboration.

Launch: Crypto-native rails for tokenizing projects, connecting to Base and Solana, and distributing agents through Telegram and other high-intent channels.

Run: Pre-packaged AI team members for operations, growth, moderation, and analytics running on the same reasoning engine.

Every layer reinforces the others. When a founder ships an aApp through OpenServ, every user interaction gets processed through its reasoning engine. Each request turns into reasoning graphs that are evaluated, cached, and benchmarked.
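The "cached" part of that pipeline can be pictured as a lookup keyed by the structure of a request rather than its values, so that structurally identical requests reuse one graph instead of triggering a new planning call. The sketch below is a hypothetical illustration: `GraphCache`, the signature scheme (task type plus sorted input field names), and the task names are assumptions, not OpenServ's API, and the planner is a stub.

```python
import hashlib
import json
from typing import Any, Callable


class GraphCache:
    """Hypothetical cache that reuses one reasoning graph per task signature."""

    def __init__(self, planner: Callable[[str], Any]):
        self._planner = planner  # expensive large-model call (stubbed below)
        self._graphs: dict[str, Any] = {}
        self.planner_calls = 0

    @staticmethod
    def signature(task_type: str, inputs: dict[str, Any]) -> str:
        """Hash the task type and input field names; input values are ignored."""
        payload = json.dumps({"task": task_type, "fields": sorted(inputs)})
        return hashlib.sha256(payload.encode()).hexdigest()

    def get(self, task_type: str, inputs: dict[str, Any]) -> Any:
        key = self.signature(task_type, inputs)
        if key not in self._graphs:  # cache miss: plan once and store
            self.planner_calls += 1
            self._graphs[key] = self._planner(task_type)
        return self._graphs[key]


cache = GraphCache(planner=lambda task: f"<graph for {task}>")
cache.get("swap_quote", {"token_in": "ETH", "token_out": "USDC", "amount": 1.0})
cache.get("swap_quote", {"token_in": "SOL", "token_out": "USDC", "amount": 25.0})
cache.get("airdrop_check", {"wallet": "0xabc"})
print(cache.planner_calls)
```

The two `swap_quote` requests differ only in their values, so they share a graph; only the `airdrop_check` request triggers a second planning call.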

Over time, that builds a specific kind of data asset:

Beyond basic logs, the system captures structured reasoning traces that show the exact paths agents take to solve domain problems.

Instead of raw prompts, it records topologies: maps of tool calls, checks and balances, and the decision branches that correlate with successful outcomes.
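One way to picture the difference between a raw log and a topology is to store each run as an ordered list of typed steps (tool calls, checks, branches) plus an outcome, and then derive a value-free "shape" from it. The schema below is an illustrative assumption. The field names, step kinds, and the `defi_swap` domain label are hypothetical and do not describe OpenServ's actual trace format.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Literal, Optional

# Type hint only: Literal documents the allowed kinds but is not enforced at runtime.
StepKind = Literal["tool_call", "check", "branch"]


@dataclass
class Step:
    kind: StepKind
    name: str
    passed: Optional[bool] = None  # set only for "check" steps


@dataclass
class Trace:
    domain: str
    steps: list[Step] = field(default_factory=list)
    success: bool = False

    def topology(self) -> tuple[str, ...]:
        """Value-free shape of the run: step kinds and names, in order."""
        return tuple(f"{s.kind}:{s.name}" for s in self.steps)


trace = Trace(
    domain="defi_swap",
    steps=[
        Step("tool_call", "fetch_price"),
        Step("check", "slippage_below_limit", passed=True),
        Step("branch", "execute_swap"),
        Step("tool_call", "submit_tx"),
    ],
    success=True,
)
print(json.dumps(asdict(trace)))  # machine-readable record of the full run
print(trace.topology())           # the shape that can be compared across runs
```

Separating the full record from its topology is what lets many runs with different values be grouped and compared by the path they took.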

This is the core of OpenServ’s moat: the depth and diversity of reasoning graphs generated by thousands of live agents operating in real crypto environments. That continuously expanding dataset is what makes OpenServ uniquely defensible.

Incentive alignment vs frontier labs

There’s a deeper strategic angle here: economic alignment.

Frontier labs make money selling tokens, literally the input and output tokens you consume through their APIs. More context, more calls, and more “thinking” lead directly to higher revenue. Models that reason longer are great for their P&L, even if that isn’t optimal for users.

OpenServ flips that logic. The OpenServ Reasoning framework compresses reasoning into tight graphs, cutting token use dramatically while maintaining or even improving accuracy. Benchmarks show more than 70% fewer tokens used, alongside the large performance-per-dollar gains cited earlier.
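To see how a token reduction turns into dollars, it helps to price a request explicitly. Assuming per-token billing with separate input and output rates, as hosted model APIs commonly use, a 70% cut in tokens on the same model brings cost down to 30% of baseline, about 3.3x cheaper, and running the graph on a cheaper model multiplies the saving further. All prices and token counts below are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Dollar prices per million tokens (placeholder values, not real rates)."""
    input_per_m: float
    output_per_m: float


def request_cost(input_tokens: int, output_tokens: int, p: Pricing) -> float:
    """Dollar cost of one request under simple per-token billing."""
    return (input_tokens * p.input_per_m + output_tokens * p.output_per_m) / 1_000_000


big = Pricing(input_per_m=5.00, output_per_m=15.00)  # hypothetical large-model rates
nano = Pricing(input_per_m=0.40, output_per_m=1.60)  # hypothetical nano-model rates

# Open-ended chain-of-thought on the large model, versus a graph run that uses
# 70% fewer input and output tokens, first on the same model, then on the nano one.
cot = request_cost(2_000, 3_000, big)
graph_same_model = request_cost(600, 900, big)
graph_nano = request_cost(600, 900, nano)

print(f"same model: {cot / graph_same_model:.2f}x cheaper")
print(f"nano model: {cot / graph_nano:.1f}x cheaper")
```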

Two asymmetries fall out from this:

Frontier labs have little incentive to push methods that reduce their own token burn. They will keep improving reasoning, but in ways that still favor high token utilization.

OpenServ is aligned with developers and users. Its pitch is simple: more correct actions per dollar, no matter which model does the underlying computation.

That makes it a natural second-layer partner, or acquisition target, for any lab that wants to demonstrate best-in-class reasoning without collapsing its own economics.

Where the moat sits

Defensibility comes from how the layers combine and compound over time.

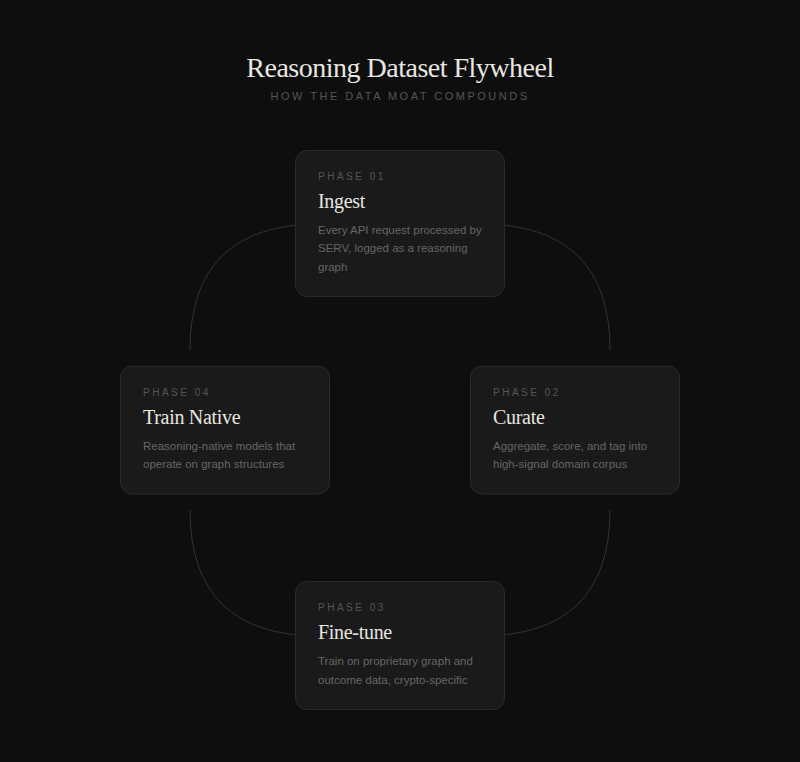

Reasoning dataset flywheel

Phase 1: Every request through the open API is processed by SERV and logged as a reasoning graph.

Phase 2: The system aggregates, scores, and tags these graphs, creating a high-signal corpus for specific domains and agent behaviors.

Phase 3: Models are fine-tuned on that proprietary dataset of graphs and outcomes, especially in crypto-specific areas that frontier labs tend to underserve.

Phase 4: Reasoning-native models are trained from scratch to fully understand and operate on those graph structures.

Each stage builds on the last, increasing replication cost.
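Phase 2 (aggregate, score, tag) is the most concrete step and can be sketched directly. This minimal version assumes each trace has already been tagged with a domain and reduced to a topology, scores each distinct topology by its success rate, and keeps only well-supported, high-success shapes as the "high-signal corpus". The thresholds, domain tags, and record format are hypothetical choices, not OpenServ's scoring method.

```python
from collections import defaultdict

# Hypothetical trace records: (domain tag, topology, succeeded?). A topology is
# the ordered sequence of step names with concrete values stripped out.
traces = [
    ("defi_swap", ("fetch_price", "check_slippage", "submit_tx"), True),
    ("defi_swap", ("fetch_price", "check_slippage", "submit_tx"), True),
    ("defi_swap", ("fetch_price", "submit_tx"), False),
    ("defi_swap", ("fetch_price", "submit_tx"), True),
    ("moderation", ("classify", "check_policy", "reply"), True),
]


def score_topologies(records, min_runs=2, min_success=0.8):
    """Group runs by (domain, topology), score each group by success rate,
    and keep groups with enough runs and a high enough success rate."""
    tally = defaultdict(lambda: [0, 0])  # (domain, topology) -> [successes, runs]
    for domain, topology, ok in records:
        tally[(domain, topology)][0] += int(ok)
        tally[(domain, topology)][1] += 1
    corpus = {}
    for (domain, topology), (wins, runs) in tally.items():
        rate = wins / runs
        if runs >= min_runs and rate >= min_success:
            corpus.setdefault(domain, []).append((topology, rate, runs))
    return corpus


for domain, kept in score_topologies(traces).items():
    for topology, rate, runs in kept:
        print(domain, " -> ".join(topology), f"success={rate:.0%} over {runs} runs")
```

In this toy data the topology that includes a slippage check survives, the one that skips it is filtered out for a low success rate, and the single moderation run is dropped for lack of evidence. That is the sense in which topologies, rather than raw prompts, carry the signal a later fine-tuning phase would use.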

Positioning in the agentic and frontier model landscape

Zooming out, OpenServ sits right in the middle of the evolving agentic stack.

Below it are the frontier models pushing raw capability.

In the middle is the OpenServ Reasoning framework, turning unstructured prompts into structured reasoning graphs.

Above are agent frameworks and apps built by founders, from trading bots to community managers.

Around it lies the crypto infrastructure that these agents need to interact with: chains, wallets, DEXs, and social protocols.

Most players focus on one layer, either infrastructure or applications. OpenServ is deliberately full-stack, an AI co-founder for crypto spanning cognition, infra, tokenization, and go-to-market. It’s a high-variance strategy, but if it works, the full stack is where most of the long-term value will concentrate.

Working thesis on OpenServ

Macro: The constraint for the next AI cycle isn’t raw IQ; it’s economically viable, auditable reasoning. Structured reasoning layers that sit on top of existing models will likely define the next major wave.

Tech: The OpenServ Reasoning framework quantifiably boosts performance per dollar, enabling smaller models to compete with or beat larger ones. Efficiency becomes a strategic resource.

Platform: By embedding the OpenServ Reasoning framework within a crypto-native agent platform, OpenServ accumulates proprietary reasoning graphs and behavioral data that could power future reasoning-native models.

Token flywheel: The SERV token integrates payments, staking, and rewards. If agentic apps scale, SERV evolves into the economic spine of a new AI-crypto ecosystem.

Strategic optionality: As frontier labs recognize the economics of reasoning, acquiring a platform like OpenServ, with a proven reasoning engine, operating agents, and crypto distribution, becomes an obvious path forward.

Why this could look inevitable in hindsight

Five years from now, it may seem obvious that reasoning efficiency, not raw intelligence, was the layer where real value accrued. History tends to reward architectures that convert brute-force capability into leverage, just as cloud abstracted hardware and browsers abstracted operating systems. The OpenServ Reasoning framework does that for cognition.

If the economy of AI agents moves toward cost-sensitive workloads and auditable autonomy, OpenServ’s position looks almost predetermined. It converts unstructured token burn into structured, recomposable reasoning, and it ties that reasoning to a financial system that can fund, measure, and reward productive agents.

In hindsight, reasoning becoming an investable layer in its own right will feel like the only logical outcome.